Road safety is a major concern for transport authorities, pedestrians and vehicle drivers. Advanced driver assistance system (ADAS) solutions were developed to provide safety and a better driving experience.

Around 1.3 million people die each year as a result of road traffic crashes, according to the World Health Organization (WHO), which also projects that road traffic crashes will become the seventh leading cause of death by 2030. Governments worldwide are therefore introducing mandatory regulations that encourage wider use of applications such as ADAS to reduce the risk of traffic accidents. Matthew Preyss, Product Marketing Manager for Highly Automated Driving at HERE Technologies, indicated that both the European New Car Assessment Programme (Euro NCAP) and the United States New Car Assessment Program (US NCAP) are driving OEMs to adopt ADAS.

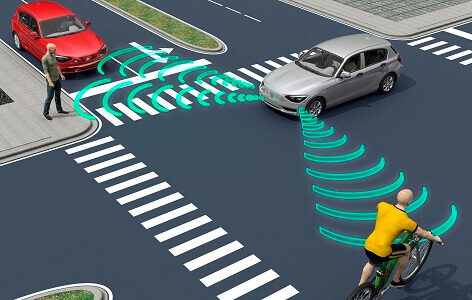

ADAS uses sensors such as cameras, radar, LIDAR and ultrasonic sensors to monitor the near and far field in different directions and provide driving assistance. Objects in blind spots, traffic signs, obstacles and distances can be detected, supporting safe driving and parking assistance. The system can also warn the driver in time to prevent traffic accidents, or suggest alternative routes to avoid traffic.

Jeremy Carlson, Principal Analyst, Autonomous Driving and Mobility, IHS Markit

The proliferation of ADAS is expected to boost the installation of sensors and cameras. However, it also challenges these systems to promptly understand and analyze the massive amounts of raw, unstructured data being collected in a dynamic traffic environment. Jeremy Carlson, Principal Analyst of Autonomous Driving and Mobility at IHS Markit, indicated that machine learning can make that process more efficient, enabling faster development and deployment of these technologies to consumers on the road.

Jeff VanWashenova, Automotive Segment Marketing Director at CEVA, said, “It is expected that more and more vehicles will have standard rear backup cameras, front cameras for collision avoidance, and even replacing rear view and side view mirrors with cameras. These sensors need to be able to improve image quality and add additional analytics like object detection and identification.” He believes machine learning can help improve both the accuracy and the speed with which these systems identify objects.

With machine learning, ADAS can move closer to being a thinking machine with human-like intelligence. Preyss indicated that machine learning can leverage vehicle sensors and map data to provide context about the vehicle’s environment, enabling better and more proactive decisions. “Learning the behavior of the driver can help to enhance the execution of ADAS features,” he added.

Aish Dubey, ADAS Business Manager, Texas Instruments

Enhancement from Machine Learning

Machine learning helps ADAS detect and recognize objects with improved accuracy and reliability. The technology performs particularly well at image classification, distinguishing objects such as pedestrians, bicycles and vehicles. Machine learning algorithms can also help ADAS recognize road signs, such as speed limit signs, more precisely.

Machine learning is gaining traction in ADAS applications thanks to continuous improvements in its algorithms. Aish Dubey, ADAS Business Manager for Texas Instruments (TI), indicated that the accuracy of machine learning algorithms has improved considerably over the last three years.

Compared with older classical vision algorithms, these improvements allow video data to be understood and interpreted at a much finer level of detail. He explained, “It leads to new applications where the areas around the vehicle can be classified as ‘available for driving’ or ‘occupied by an obstacle of a certain type’ more reliably, helping to prevent dangerous driving situations.” He expects this improvement to enable safer vehicle operation at SAE automation levels 3 and higher in highway scenarios very soon.

Matthew Preyss, Product Marketing Manager, Highly Automated Driving, HERE Technologies

Some active safety features, such as collision avoidance, automatic emergency braking, blind spot detection and lane departure assistance, are currently based on traditional vision algorithms. VanWashenova indicated, however, that machine learning is a more robust method that is well suited to the harsh automotive environment and can deliver the performance needed in ADAS. He cites traffic sign recognition as an example. “Machine learning based systems can effectively identify signs that may be obstructed, rotated or modified, and can be trained to handle many of the variables found in real-life applications,” he explained.

When asked what machine learning adds to ADAS, Carlson indicated that the gains are primarily improvements in accuracy rather than brand-new functions. “Automatic emergency braking systems already exist and are quite functional today, but they can be improved to better recognize pedestrians, cyclists or animals in more varied poses,” said Carlson.

Through the use of sensors and cameras, ADAS can identify pedestrians, bicycles and vehicles accurately to prevent traffic accidents.

Driver State Monitoring

ADAS is not just for monitoring the road ahead, but also for monitoring drivers. Drowsy and distracted driving are key causes of car crashes. To address this, inward-facing cameras can be used to track a driver’s gaze direction and head position. If the system detects that the driver is not looking straight ahead while driving, it alerts the driver to look back at the road.
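The alert logic described above can be illustrated with a minimal sketch. It assumes an upstream camera pipeline already delivers a per-frame head or gaze angle (yaw and pitch, with 0° meaning straight ahead); the names `GazeSample` and `distraction_alerts`, the 20° cone and the 2-second limit are all hypothetical choices for illustration, not values from any vendor’s system. An alert fires once per continuous “eyes off road” episode, so a brief mirror check does not trigger a warning.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass
class GazeSample:
    t: float          # timestamp in seconds
    yaw_deg: float    # horizontal head/gaze angle; 0 = straight ahead (assumed input)
    pitch_deg: float  # vertical head/gaze angle; 0 = straight ahead (assumed input)


def off_road(sample: GazeSample, max_angle_deg: float) -> bool:
    """True if the gaze points outside a square cone around 'straight ahead'."""
    return abs(sample.yaw_deg) > max_angle_deg or abs(sample.pitch_deg) > max_angle_deg


def distraction_alerts(samples: Iterable[GazeSample],
                       max_angle_deg: float = 20.0,   # hypothetical threshold
                       max_off_road_s: float = 2.0    # hypothetical time limit
                       ) -> List[float]:
    """Return the timestamps at which an alert would be raised.

    One alert per continuous off-road episode, issued as soon as the gaze
    has been off the road for longer than max_off_road_s seconds.
    """
    alerts: List[float] = []
    episode_start: Optional[float] = None  # when the current off-road episode began
    alerted = False                        # has this episode already triggered an alert?
    for s in samples:
        if off_road(s, max_angle_deg):
            if episode_start is None:
                episode_start = s.t
                alerted = False
            if not alerted and s.t - episode_start > max_off_road_s:
                alerts.append(s.t)
                alerted = True
        else:
            episode_start = None  # driver looked back at the road; reset
    return alerts


if __name__ == "__main__":
    # 2 Hz samples: straight ahead for 1 s, then looking 45 degrees to the side.
    samples = [GazeSample(t=i * 0.5, yaw_deg=0.0 if i < 2 else 45.0, pitch_deg=0.0)
               for i in range(9)]
    print(distraction_alerts(samples))
```

In the usage example the driver looks away at t = 1.0 s, so the alert is raised at the first sample more than 2 s later (t = 3.5 s). A production system would also have to handle missing face detections, calibration per driver and drowsiness cues such as eyelid closure, which this sketch leaves out.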

Sometimes, clues appear in a driver’s behavior or biological patterns rather than in their face or eye movements. Mitsubishi Electric uses machine learning algorithms to detect absent-mindedness and other cognitive distractions in drivers when their vehicles are traveling straight. The company’s algorithm analyzes time-series data, including vehicle information such as steering as well as the driver’s heart rate and facial orientation. By combining data on normal driving with time-series data from the actual vehicle and driver, the technology predicts appropriate driver actions in real time. When the driver’s actual actions differ from the algorithm’s prediction of appropriate driving actions, the driver is immediately alerted.
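The general idea of “predict the appropriate action, then alert on deviation” can be sketched as follows. This is not Mitsubishi Electric’s algorithm, whose details are not given here; it is a deliberately simple stand-in that assumes a single steering-angle signal (the described system also uses heart rate and facial orientation), a one-step autoregressive model fitted by least squares on normal-driving data, and an alert whenever the prediction error exceeds `k` times the error spread seen during normal driving. The function names, the synthetic sine-wave trace and `k = 3.0` are hypothetical.

```python
import math
import statistics
from typing import List, Sequence, Tuple


def fit_ar1(normal: Sequence[float]) -> Tuple[float, float, float]:
    """Fit x[t] ~ a * x[t-1] + b by ordinary least squares on normal-driving data.

    Returns (a, b, sigma): the model coefficients and the standard deviation
    of the one-step prediction residuals on the training data.
    Assumes the training series is not constant (otherwise var_prev is 0).
    """
    prev, curr = normal[:-1], normal[1:]
    mean_p, mean_c = statistics.fmean(prev), statistics.fmean(curr)
    var_prev = sum((p - mean_p) ** 2 for p in prev)
    cov = sum((p - mean_p) * (c - mean_c) for p, c in zip(prev, curr))
    a = cov / var_prev
    b = mean_c - a * mean_p
    residuals = [c - (a * p + b) for p, c in zip(prev, curr)]
    return a, b, statistics.pstdev(residuals)


def deviation_alerts(live: Sequence[float], a: float, b: float,
                     sigma: float, k: float = 3.0) -> List[int]:
    """Indices t where the observed value differs from the one-step
    prediction a * live[t-1] + b by more than k * sigma."""
    return [t for t in range(1, len(live))
            if abs(live[t] - (a * live[t - 1] + b)) > k * sigma]


if __name__ == "__main__":
    # Synthetic stand-in for a recorded steering-angle trace of normal driving.
    normal = [math.sin(0.1 * t) for t in range(200)]
    a, b, sigma = fit_ar1(normal)
    # "Live" trace: the same pattern with one sudden steering jerk at index 100.
    live = normal[:100] + [normal[100] + 2.0] + normal[101:150]
    print(deviation_alerts(live, a, b, sigma))
```

On this synthetic data the injected jerk at index 100 is flagged, and so is index 101, because the next prediction starts from the disturbed value. A real system would use a richer multivariate model and would likely require a deviation to persist before warning the driver, to limit false alarms.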

Jeff VanWashenova, Automotive Segment Marketing Director, CEVA

When it comes to adopting these complex machine learning algorithms, heavy computational demands and cost are the two major obstacles to widespread adoption across all levels of car ownership. Striking a balance between cost and performance has always been essential.

Dubey indicated, “ADAS technology will continue to differentiate automakers and influence buying decisions. Automakers need scalable technology that can enhance the customer experience at all levels of car ownership from economy to premium cars. It is critical to offer ADAS hardware and software technology at cost points that allow the markets to serve most customers.”

VanWashenova said, “Automakers want to introduce more features that will take advantage of smart sensors found throughout the vehicle. These smart sensors will need to utilize machine learning algorithms which are developed on much larger and power-hungry computing platforms. When these systems will be deployed in high volumes, cost-effective and power efficient solutions are needed.”

There is growing interest in installing ADAS in new cars around the world to increase driving safety and comfort. Machine learning is expected to enhance ADAS performance and to serve as a differentiator. Whatever technology is applied, the vital goal is to make roads safer and reduce traffic accidents so that every driver and pedestrian gets home safely.