We put video analytics to the test in an effort to sort fact from fiction. A&S partnered with Australian integrator VideoControlRoom for its first Intelligent Video Shootout. Michael Brown, MD of VideoControlRoom, describes installation challenges, environmental factors, and what makes an intelligent video solution stand out.

When VideoControlRoom set out to evaluate intelligent video, we did not budget enough time. Setting up these systems, understanding their different algorithms and working with tools and terminology from multiple vendors add up to a huge job.

Only four vendors out of dozens participated in this challenge. Having tested these systems, we now understand how easy it is, in the wrong hands, to botch a deployment and produce poor results.

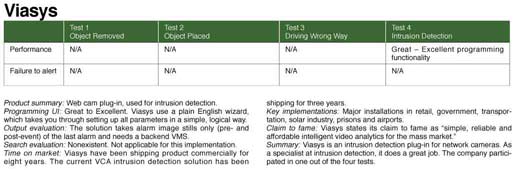

We applaud the participating vendors for their willingness to take that risk and for recognizing the benefits of open testing. The test subjects were Aimetis, IntelliVision, Viasys and VideoIQ.

The lesson learned from this test was that intelligent video systems (IVS) are not plug-and-play devices. They take training, testing and commitment to get results, and those results can be thrown off by getting the programming wrong, introducing harsh variables or setting up imperfect fields of view (FOV).

VideoControlRoom took a different tack from our previous intrusion detector challenge by producing generalized results. The point of the shootout was to evaluate IVS: how well they performed assigned tasks, and at what cost.

Test Setup

We set up two locations with four network cameras each: three Axis P1344 cameras and the VideoIQ VIQ-CT216, a combination camera, NVR and video content analytics (VCA) device.

The cameras at each test location were set up with the same FOV and tuned wirelessly from a laptop displaying their digital streams. All cameras supported PoE, so we ran four Cat-5E cables to the mounting locations.

The first location, inside a warehouse area used for high-value stock items, was used to test object removal.

Daytime lighting there is bright, from high-bay sodium vapor lights and natural light through clear roof panels. At night, the area is either dark or well lit by the high-bay lights.

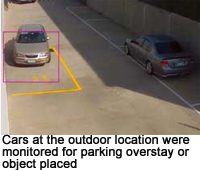

The second location was outdoors, overlooking a loading bay, and was used to test object placed, wrong-way driving and intrusion detection. By day, this location is lit by sunshine; at night, it had either no light or good halogen illumination.

For processing, two of the tested systems ran server-side as software on Windows PCs, while the other two ran on the edge, in cameras. For server-side processing, we used Dual Core P4 systems running Windows XP Pro, each with 4 GB of RAM.

The choice between edge and server architecture is a separate discussion. Edge processing can optimize network use, while server deployments consolidate system management and offer other benefits.
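To make the network argument concrete, the sketch below compares the average uplink load when every camera streams continuously to a central analytics server with the load when edge devices analyze video locally and send only event clips. It assumes the edge units transmit nothing but those clips; the bitrate, event rate and clip length are hypothetical placeholders, not measurements from this test.

```python
def server_side_mbps(cameras: int, stream_mbps: float) -> float:
    """Server-side VCA: every camera streams continuously to the analytics server."""
    return cameras * stream_mbps


def edge_average_mbps(cameras: int, events_per_hour: float,
                      clip_seconds: float, stream_mbps: float) -> float:
    """Edge VCA (assumed model): video is analyzed in the camera and only
    event clips are sent upstream. Returns the average uplink load in Mbit/s."""
    clip_megabits = clip_seconds * stream_mbps
    megabits_per_hour = cameras * events_per_hour * clip_megabits
    return megabits_per_hour / 3600.0
```

With four hypothetical cameras at 4 Mbit/s and six 30-second events per camera per hour, continuous streaming needs 16 Mbit/s, against an average of about 0.8 Mbit/s for event clips. Real savings depend on how often alarms fire and whether video is also recorded centrally.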

Cameras

Axis Communications kindly lent us eight P1344 HDTV network cameras. The Axis cameras, which all of our analytics vendors support, were a snap to set up: the PoE cameras patched in, booted up and automatically obtained a DHCP-assigned IP address.

Locating the cameras was just as easy. Simply run the provided Axis IPUtility, and the application lists every Axis camera discovered on your network, with both its MAC and IP addresses.

The Axis cameras are rated at 0.05 lux in B/W mode, making them an excellent platform for VCA, with clear, crisp pictures around the clock.

Lighting

VideoControlRoom has been monitoring IVS 24/7/365 for remote intrusion detection for more than five years, so we understood the impact of lighting on VCA.

Lighting can potentially wreak havoc on intelligent video. If it is dark and you cannot see what is going on, do not expect your IVS to, either.

With good light and a well-framed camera, it was possible to get alarm accuracy at or near 100 percent from all systems. Because that accuracy depends on adequate lighting, lighting is a big hidden cost of using intelligent video as an intrusion detection system.

One alternative is thermal cameras. However, they are more expensive and may not suit other uses.

Perspective

Perspective, meaning the camera's angle, FOV and plane, is critical. For example, it is easier for VCA to count people with a camera mounted directly overhead.

To catch someone breaking in, position the camera so the intruder traverses the FOV horizontally. Higher angles work best, as does designating zones of interest. The smaller the object, the harder it will be to track.

Testing Algorithms

Algorithm: Object Removed

Application: Sensitive stock

Object removed is an ideal algorithm for protecting high-value assets. For this application, you can mount the camera to get a permanently unobstructed view of the protected asset.
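The idea behind object removed can be illustrated with a simple reference comparison: store a snapshot of the protected region and alarm when that region stays different from the snapshot. The sketch below is a generic illustration, not the algorithm any tested vendor uses; frames are plain grayscale pixel grids, and `pixel_delta`, `change_threshold` and `persist_frames` are hypothetical tuning parameters.

```python
from typing import List, Tuple

Frame = List[List[int]]          # grayscale pixel rows, values 0-255
Roi = Tuple[int, int, int, int]  # (top, left, bottom, right); bottom/right exclusive


def changed_fraction(reference: Frame, current: Frame, roi: Roi,
                     pixel_delta: int = 30) -> float:
    """Fraction of pixels in the region of interest whose brightness differs
    from the reference snapshot by more than pixel_delta."""
    top, left, bottom, right = roi
    changed = total = 0
    for y in range(top, bottom):
        for x in range(left, right):
            total += 1
            if abs(current[y][x] - reference[y][x]) > pixel_delta:
                changed += 1
    return changed / total if total else 0.0


class ObjectRemovedDetector:
    """Alarms once the protected region has differed from its reference for
    persist_frames consecutive frames, so a brief occlusion (someone walking
    past the asset) does not raise an alarm on its own."""

    def __init__(self, reference: Frame, roi: Roi,
                 change_threshold: float = 0.5, persist_frames: int = 3):
        self.reference = reference
        self.roi = roi
        self.change_threshold = change_threshold
        self.persist_frames = persist_frames
        self.streak = 0  # consecutive "changed" frames seen so far

    def update(self, frame: Frame) -> bool:
        if changed_fraction(self.reference, frame, self.roi) >= self.change_threshold:
            self.streak += 1
        else:
            self.streak = 0
        return self.streak >= self.persist_frames
```

The persistence counter is one reason an unobstructed view matters: a region that is regularly blocked by people or forklifts stays "changed" for long stretches, blurring the line between occlusion and removal.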

Our testing showed smart video works and is ready today, with all vendors performing well.

Object removed is not useful for everyday stock protection unless you are targeting specific stationary assets. For tracking frequently moving stock, you might be better off with a free, post-event activity tracking solution based on conventional video motion detection.

Algorithm: Object Placed

Application: Loading bay parking overstay

This is a handy VCA application with numerous real-world uses, such as enforcing parking overstay limits, a potential source of revenue. Object placed detection can also confirm that containers are stacked in the right place or check for items falling off a production line. Our tests show this application is also commercially viable, with all vendors performing well.

In this test, we used the cameras to cover a loading bay and detect whether a vehicle stayed parked for more than 15 minutes. In the real world, parking overstay detection has a number of applications that could justify its cost.
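Parking overstay boils down to a dwell timer per tracked object. The sketch below assumes a hypothetical upstream tracker (not shown) that reports, with a timestamp in seconds, the IDs of vehicles currently inside the parking zone. It flags a vehicle once it has stayed longer than the 15-minute limit used in our test, and only once per visit.

```python
from dataclasses import dataclass, field


@dataclass
class OverstayMonitor:
    limit_seconds: float = 15 * 60
    first_seen: dict[int, float] = field(default_factory=dict)  # ID -> arrival time
    alarmed: set[int] = field(default_factory=set)              # IDs already reported

    def update(self, timestamp: float, ids_in_zone: set[int]) -> list[int]:
        """Return IDs that have just exceeded the dwell limit."""
        # Forget vehicles that have left, so a later return restarts the timer.
        for obj_id in list(self.first_seen):
            if obj_id not in ids_in_zone:
                del self.first_seen[obj_id]
                self.alarmed.discard(obj_id)
        new_alarms = []
        for obj_id in ids_in_zone:
            arrived = self.first_seen.setdefault(obj_id, timestamp)
            if timestamp - arrived > self.limit_seconds and obj_id not in self.alarmed:
                self.alarmed.add(obj_id)
                new_alarms.append(obj_id)
        return sorted(new_alarms)
```

The timer only works if the tracker keeps the same ID through the whole stay; a lighting change such as the roller door opening can break the track and silently reset it.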

Accuracy was high for this test. Vehicles are probably the easiest objects for VCA to recognize, since they are large and their shape is clearly distinct from people and animals.

All the products struggled with bad light for this test. The loading bay was adjacent to a roller door, which cast a bright light on the bay area when opened at night. When the outside lights were off, none of the VCA systems detected parking overstay.

Algorithm: Directional Detection

Application: Driving the wrong way

Based on our testing, this is another mature VCA application. However, installers must pay attention to camera perspective. In our test, we looked down a driveway instead of across it, which did not produce great results. With that view, a detection line drawn horizontally across the image raises alerts for objects that only appear to have crossed it because of the camera's perspective.
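Directional detection is commonly built on line-crossing geometry. The sketch below is a generic illustration rather than any vendor's implementation: it uses the sign of a 2D cross product to tell which side of a detection line a tracked point is on, and reports a crossing only when the movement also passes through the line segment itself. The track points and the `allowed` direction are hypothetical inputs. Because it works purely on image-plane positions, it cannot tell a real crossing from an apparent one, which is why perspective matters.

```python
Point = tuple[float, float]


def side(a: Point, b: Point, p: Point) -> float:
    """2D cross product of (b - a) and (p - a): positive on one side of the
    directed line a->b, negative on the other, zero on the line."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def crossing_direction(a: Point, b: Point, prev: Point, curr: Point) -> int:
    """+1 if a track moved from the positive to the negative side of segment
    a-b between two frames, -1 for the reverse, 0 if it did not cross."""
    s_prev, s_curr = side(a, b, prev), side(a, b, curr)
    if s_prev * s_curr >= 0:
        return 0  # stayed on one side, or only touched the line
    # The line's endpoints must lie on opposite sides of the movement;
    # otherwise the track passed beyond the end of the detection line.
    if side(prev, curr, a) * side(prev, curr, b) > 0:
        return 0
    return 1 if s_prev > 0 else -1


def is_wrong_way(a: Point, b: Point, prev: Point, curr: Point, allowed: int) -> bool:
    """True when the track crossed the line opposite to the allowed direction."""
    direction = crossing_direction(a, b, prev, curr)
    return direction != 0 and direction != allowed
```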

Algorithm: Intrusion Detection

Application: Yard area protection

Intrusion detection appears to be one VCA application that is gaining commercial traction. It can create a virtual fence, providing video verification of intrusion without outdoor detectors. While this might save on outdoor detectors, watch for cost blowouts during testing, which must be done in both daytime and nighttime conditions. With VCA intrusion detection, you are vulnerable to false alarms the moment a large spider makes the camera its home. For this reason, do not use IR cameras outdoors, since they are spider magnets.

Bright lights should not be located too close to the camera: small bugs lit up by those lights can appear very large and will trigger alarms.
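A virtual fence is, at its core, a zone test plus filters that reject implausible objects. The sketch below is a generic illustration with hypothetical thresholds: it checks that a tracked object's ground point lies inside a polygon zone (ray casting), that its on-screen height falls within a person-sized range, and that both conditions hold for several consecutive frames. A spider or brightly lit insect close to the lens will often exceed the size limit, but no fixed filter catches everything, so the thresholds still need tuning on site.

```python
from dataclasses import dataclass

Point = tuple[float, float]


@dataclass
class Detection:
    x: float          # foot point of the object's bounding box, in pixels
    y: float
    height_px: float  # bounding-box height, in pixels


def inside(zone: list[Point], x: float, y: float) -> bool:
    """Ray-casting point-in-polygon test."""
    result = False
    n = len(zone)
    for i in range(n):
        x1, y1 = zone[i]
        x2, y2 = zone[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge straddles the horizontal ray
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                result = not result
    return result


def is_intrusion(track: list[Detection], zone: list[Point],
                 min_height: float, max_height: float, min_frames: int) -> bool:
    """True if the last min_frames detections are all inside the zone and
    person-sized; all thresholds are hypothetical, site-specific values."""
    if len(track) < min_frames:
        return False
    return all(inside(zone, d.x, d.y) and min_height <= d.height_px <= max_height
               for d in track[-min_frames:])
```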

Be prepared for ongoing tuning, camera cleaning and an annual test to keep VCA intrusion detection reliable. Any smart video vendor who tells you otherwise doesn't live in the real world.
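Walk-test results are easier to act on when they are logged per scenario. The sketch below is one hypothetical way to do that: it records staged walks, detections and false alarms for each lighting condition or zone, then lists the scenarios that miss an acceptance target. The 95 percent detection target and zero false-alarm allowance are placeholder values, not figures from our test.

```python
from dataclasses import dataclass


@dataclass
class WalkTestResult:
    scenario: str      # hypothetical label, e.g. "night, halogen off"
    walks: int         # staged intrusions performed
    detected: int      # staged intrusions that raised an alarm
    false_alarms: int  # alarms raised with no staged intrusion


def detection_rate(result: WalkTestResult) -> float:
    return result.detected / result.walks if result.walks else 0.0


def failing_scenarios(results: list[WalkTestResult], min_rate: float = 0.95,
                      max_false_alarms: int = 0) -> list[str]:
    """Scenarios that need re-tuning before the system can be trusted."""
    return [r.scenario for r in results
            if detection_rate(r) < min_rate or r.false_alarms > max_false_alarms]
```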

Implementation Cost

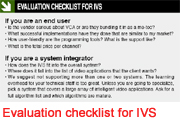

Examining costs is important, as not all of them are obvious, and the hidden ones can cause overruns. A budget should include:

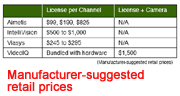

* Cost of camera channels, including DVRs, NVRs and cameras: This depends on camera specs, server specs and wiring. Budget US$1,000 to $2,000 per channel, depending on cameras and servers.

* Cost of algorithm license: A 16-camera system is not likely to require 16 channels of smart video. The factory floor and loading dock might need it, but not the hallway. If the vendor's licensing model is not flexible, you may end up paying for unused smart video channels.

* Cost to program: Programming can cost anywhere from a few dollars to several hundred dollars per smart video channel, on top of the camera. It is critical to invest in staff training to avoid learning-curve cost overruns.

* Cost to test: Walk testing is critical. It is easy to assume you have something programmed properly. It is not until you test your setup that you know the programming is spot on. Walk testing is a potentially big hidden cost, which gets larger with more variables in your camera's environment. For example, walk testing at night may require paying technicians overtime.

* Cost of event monitoring: Event monitoring is another consideration. Once you have set up all this technology, who will look at the output and at what cost?

The more false alarms, the more labor will be involved in event management. The ideal scenario would be for the person receiving events to also have programming skills. This way, the system is fine-tuned over time, avoiding “the boy who cried wolf” scenarios.

The costs of VCA need to be added up. Either the asset or risk has to be of greater value than the cost of the implementation, or else you may spend a thousand to save a hundred.
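The budget items above can be added up with a short calculation. The sketch below is a hypothetical model: only the US$1,000 to $2,000 per-channel range comes from this article, and every other figure should come from vendor quotes. It also shows how an inflexible licensing model, which forces a license on every camera, changes the answer.

```python
from dataclasses import dataclass


@dataclass
class VcaBudget:
    cameras: int
    analytics_channels: int          # channels that actually need smart video
    channel_cost: float              # cameras, recorder share and wiring per channel
    license_per_channel: float
    programming_per_channel: float
    walk_test_hours: float
    hourly_rate: float               # technician rate, including any night overtime
    monitoring_per_year: float
    flexible_licensing: bool = True  # False: every camera must be licensed

    def total(self, years: int = 1) -> float:
        licensed = self.analytics_channels if self.flexible_licensing else self.cameras
        return (self.cameras * self.channel_cost
                + licensed * self.license_per_channel
                + self.analytics_channels * self.programming_per_channel
                + self.walk_test_hours * self.hourly_rate
                + self.monitoring_per_year * years)


def worth_it(budget: VcaBudget, value_protected: float, years: int = 1) -> bool:
    """The protected asset or risk must be worth more than the implementation."""
    return value_protected > budget.total(years)
```

For example, a 16-camera site with smart video on four channels, at US$1,500 per channel plus placeholder figures of US$300 per license, US$200 per channel of programming, 10 walk-test hours at US$80 and US$2,000 a year for monitoring, totals US$28,800 in the first year with flexible licensing and US$32,400 if all 16 channels must be licensed. An asset worth US$30,000 would justify the first setup but not the second.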

Our experience is that the labor involved in initial setup, walk testing and responding to alarms, as well as ongoing tuning and maintenance, has the potential to far outweigh the cost of the algorithm license. Successful IVS will be user-friendly.

Breaking down licensing costs per channel is not clear-cut. VideoIQ bundles the VCA technology license into the cost of a camera, while Aimetis includes VCA in a full Video Management Suite whose cost varies with how many algorithms are needed. Viasys licenses VCA per camera, but charges less for server-side or centralized processing. To get an exact handle on license costs, contact each vendor directly.

How does IVS fit?

An end user deploys a video surveillance system for many reasons, and VCA could be at the top of that list or somewhere in the middle. Where it ranks will affect your ability to deploy the technology and get results. Common video tasks include recording and playback, remote viewing, VCA and remote video verification.

There are two types of IVS installations. The first is where VCA is the primary reason for a video installation. Cameras will be rolled out specifically to count people or watch for parking violations. This is ideal, as you can frame the FOV for your IVS application and will get solid results.

The second is where VCA is down the list or not required on initial setup. Users don't know what they want to do with smart video, but understand it may come in handy. Cameras are set up for the primary tasks, with smart video added later.

These installations require a DVR, NVR or network camera capable of running analytics algorithms. Installers can deploy intelligent video to the existing infrastructure remotely or drive to the site.

FOV is important. All manufacturers require objects to take up a certain percentage of the FOV for tracking.

For these installations, it will be difficult to get accurate results, especially with a camera that is not ideally located or framed. PTZ cameras can help, since they can be zoomed in to just the right spot, which suits analytics applications added after the initial installation.
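Because manufacturers require objects to fill a minimum share of the FOV, it helps to estimate that share before mounting a camera. The sketch below uses a simple pinhole-camera approximation (object roughly centered and facing the camera, lens distortion ignored). The 10 percent minimum in `trackable` is a hypothetical placeholder, since each vendor specifies its own requirement.

```python
import math


def fov_fraction(object_height_m: float, distance_m: float,
                 vertical_fov_deg: float) -> float:
    """Share of the frame height an object fills (pinhole approximation)."""
    scene_height_m = 2 * distance_m * math.tan(math.radians(vertical_fov_deg) / 2)
    return object_height_m / scene_height_m


def height_in_pixels(object_height_m: float, distance_m: float,
                     vertical_fov_deg: float, image_height_px: int) -> float:
    """Approximate on-screen height of the object, in pixels."""
    return fov_fraction(object_height_m, distance_m, vertical_fov_deg) * image_height_px


def trackable(object_height_m: float, distance_m: float, vertical_fov_deg: float,
              min_fraction: float = 0.10) -> bool:
    """min_fraction is a hypothetical vendor requirement."""
    return fov_fraction(object_height_m, distance_m, vertical_fov_deg) >= min_fraction
```

Under these assumptions, a 1.8 m person 20 m away fills about 4.5 percent of the frame height at a 90-degree vertical FOV (about 32 pixels of a 720-pixel-high image), but about 17 percent once a PTZ camera zooms to a 30-degree FOV.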

Compromise is always a risk with the second type of deployment. The best thing is to be upfront with clients. If they want a camera to read license plates and count vehicles, one or both applications will suffer.

Installers can frame a camera to perform one or more tasks, but it will only excel at the primary task. Primary tasks could be identification, verification or IVS applications where the camera is specifically framed for video analysis.

Summary

Judging products is subjective, with different answers depending on your background, training and time frame. VideoControlRoom provides remote video services, so we prefer systems that are manageable on a commercial scale and that suit mass deployment. What works for us might not work for you, so we encourage you to perform your own trials before committing to any technology. We hope this article provides a framework for testing.

This challenge has shown that "shootout" is the wrong way to think about it. After our latest technology evaluation, we believe future shootouts should be recast as "technology road tests." It isn't fair to name one product the winner, as it is up to the market to weigh performance, cost and application suitability.

The reality is that we rely on humans, who make mistakes, to set up equipment. These real-world factors are where IVS suffers. It works best in well-lit, indoor, insect-free, dust-free, well-maintained, application-specific camera installations.

IVS needs to be a collaboration between all the players to be successful. Users should make sure there are good lines of communication between all parties. Everyone should be trained, and support should flow freely from the IVS originator to the end user.

A Whole Picture of the Test