Introduction: When Observation Turns into Interpretation

In the 2002 cinematic masterpiece Minority Report, the most striking concept wasn't just the prediction of events; it was the sophisticated system behind it: a world where vast streams of visual data were continuously interpreted, correlated, and acted upon without waiting for human instruction.

Today, that concept no longer feels entirely fictional. Modern video surveillance systems are undergoing a similar, fundamental transition. No longer confined to the passive roles of recording and playback, they are increasingly expected to interpret complex environments, filter relevance from noise, and support timely decisions. As the scale and complexity of video data grow exponentially, this shift has transformed Artificial Intelligence (AI) from a "supplementary feature" into a foundational requirement.

From Seeing to Understanding: Why AI Is No Longer Optional

Traditional surveillance models, built to capture footage and rely solely on human eyes for interpretation, simply do not scale in today’s landscape. Several factors have made the "capture-only" model obsolete:

- Massive Deployment Scales: Cameras are expanding across increasingly diverse and geographically distributed sites.

- Cognitive Overload: The sheer volume of continuous video streams far exceeds human monitoring capacity.

- Contextual Complexity: Security-relevant events are often subtle and highly dependent on context, making them easy to miss in a sea of raw data.

AI enables surveillance systems to move beyond visual capture toward structured understanding. By utilizing object detection, attribute recognition, and behavior analysis, AI transforms raw video into actionable insight. Without AI, surveillance remains reactive: a digital witness after the fact. With AI, it becomes proactive, capable of real-time prioritization and decision support.
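To make "structured understanding" more concrete, here is a minimal Python sketch of how detection, attribute, and behavior outputs might be turned into a ranked list of events for an operator. The `Detection` fields, the `BEHAVIOR_WEIGHT` table, and the scoring rule (behavior weight multiplied by detector confidence) are hypothetical illustrations. They are not Hanwha Vision's data model or any product API.

```python
from dataclasses import dataclass, field

# Hypothetical severity weights per behavior class; a real system would tune these per site.
BEHAVIOR_WEIGHT = {"loitering": 2.0, "intrusion": 5.0, "normal": 0.0}


@dataclass
class Detection:
    camera_id: str
    label: str                                       # object class, e.g. "person", "vehicle"
    attributes: dict = field(default_factory=dict)   # attribute recognition, e.g. {"upper_color": "red"}
    behavior: str = "normal"                         # behavior analysis result
    confidence: float = 0.0                          # detector confidence in [0, 1]


def priority(d: Detection) -> float:
    """Assumed scoring rule: behavior weight (1.0 if unknown) scaled by confidence."""
    return BEHAVIOR_WEIGHT.get(d.behavior, 1.0) * d.confidence


def triage(detections: list[Detection], top_k: int = 3) -> list[Detection]:
    """Return up to top_k detections, highest priority first, dropping zero-priority ones."""
    ranked = sorted((d for d in detections if priority(d) > 0), key=priority, reverse=True)
    return ranked[:top_k]


events = [
    Detection("cam-01", "person", {"upper_color": "red"}, "loitering", 0.9),
    Detection("cam-02", "vehicle", {}, "normal", 0.99),
    Detection("cam-03", "person", {}, "intrusion", 0.7),
]
for d in triage(events):
    print(d.camera_id, round(priority(d), 2))
```

With these sample inputs, the intrusion at `cam-03` (score 3.5) ranks ahead of the loitering person at `cam-01` (score 1.8). The ordinary vehicle at `cam-02` scores zero and is filtered out. This is the "prioritization" step in miniature, though the weights here are arbitrary.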

Edge AI and the Importance of Hybrid Architectural Design

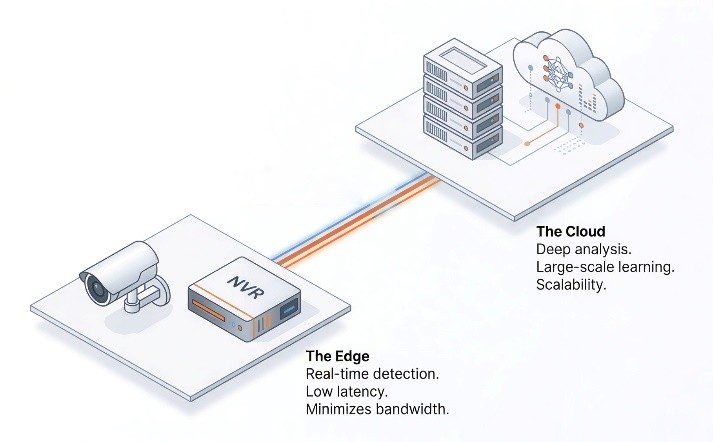

As AI integration accelerates, the strategic focus is shifting from what AI can do to where it operates. While early systems leaned heavily on the cloud, the rising costs of high-definition data transmission and complex data sovereignty regulations have highlighted the need for a more balanced approach.

In this context, Hybrid Architecture is emerging as the industry's preferred approach. By combining the strengths of both edge and cloud, this model is expected to become the standard security infrastructure for the AI era by 2026.

This architecture enables a more efficient distributed computing structure. On-premises edge devices (cameras and NVRs) handle the first layer of real-time detection and minimize bandwidth strain by transmitting only essential data. The cloud then performs a second layer of deep analysis and large-scale learning, which can significantly improve the accuracy of AI functions.
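The edge/cloud split above can be illustrated with some back-of-the-envelope arithmetic. The sketch below rests on three assumptions: a single 4 Mbps stream, a first-layer confidence threshold of 0.6, and roughly 200 KB uploaded per forwarded event. These figures are hypothetical, and so are the names `EdgeEvent`, `edge_first_layer`, and `uploaded_gb`. None of them are Wisenet or vendor specifications. The cloud's second-layer analysis is not modeled; the sketch only estimates how much less data reaches it.

```python
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400


@dataclass
class EdgeEvent:
    label: str
    confidence: float   # first-layer detector confidence in [0, 1]
    payload_bytes: int  # metadata plus a short snapshot (assumed size)


def continuous_upload_gb(bitrate_mbps: float) -> float:
    """Daily volume, in decimal GB, if one full stream is sent to the cloud."""
    return bitrate_mbps * SECONDS_PER_DAY / 8 / 1000  # Mb -> MB -> GB


def edge_first_layer(events: list[EdgeEvent], threshold: float = 0.6) -> list[EdgeEvent]:
    """Runs on the camera/NVR: keep only events worth a second, cloud-side look."""
    return [e for e in events if e.confidence >= threshold]


def uploaded_gb(forwarded: list[EdgeEvent]) -> float:
    """Daily volume, in decimal GB, of the events actually forwarded."""
    return sum(e.payload_bytes for e in forwarded) / 1e9


# Illustrative day: 1,000 candidate events, half of them low-confidence noise.
day = [EdgeEvent("person", 0.9, 200_000), EdgeEvent("shadow", 0.3, 200_000)] * 500
forwarded = edge_first_layer(day)
print(f"full stream: {continuous_upload_gb(4.0):.1f} GB/day")
print(f"edge-filtered: {uploaded_gb(forwarded):.1f} GB/day ({len(forwarded)} events)")
```

Under these assumed inputs, continuous upload comes to 43.2 GB per day, while the 500 forwarded events total about 0.1 GB. Real savings depend on scene activity, codec settings, and how much context the cloud layer needs for its second pass.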

Ultimately, Hybrid Architecture gives users the flexibility to deploy functions where they are most effective, whether for immediate onsite response or long-term analytical scalability. This combination not only enhances performance but also improves total cost of ownership (TCO) through high-performance, AI-native edge processing.

Wisenet 9: An AI-Native SoC for Edge Surveillance

Hanwha Vision’s Wisenet 9 exemplifies this AI-native approach. Designed specifically for the rigorous workloads of video surveillance, Wisenet 9 embeds AI processing directly into the chip architecture rather than treating it as an external software layer.

At the heart of the SoC, critical tasks are handled by a Dual NPU (Neural Processing Unit) structure, which provides separate, optimized processing pipelines for AI-driven image enhancement and deep-learning video analytics. This specialized hardware allows concurrent execution: the camera can perform complex object classification while simultaneously maintaining high-fidelity image processing, without degrading system reliability.

By handling AI inference, image processing, and video encoding natively on-chip, with the Dual NPU dedicated to AI workloads, Wisenet 9 maintains consistent intelligence even in demanding conditions. Examples include ultra-low-light environments and high-traffic scenes where both visual clarity and analytic accuracy are paramount.

Beyond Accuracy: Trust as a Technical Requirement

As AI becomes deeply embedded in security workflows, the industry's expectations are evolving. It is no longer enough for an AI model to be "accurate" in a lab setting. To be operationally effective, surveillance AI must be:

- Consistent across varied and unpredictable environments.

- Stable under continuous, 24/7 operation.

- Interpretable so that human operators can understand and act upon the logic of the system.

Excessive false alarms or "black box" decision logic can quickly undermine operational trust. Consequently, the focus is shifting from how well AI detects to how reliably and responsibly it operates. This requires more than just software; it necessitates a combination of robust hardware architecture, high-quality training data, and strict governance frameworks.
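One way to make "consistent" and "excessive false alarms" measurable is to track, for each site, how many alarms operators confirm versus dismiss. The sketch below is a hypothetical evaluation helper. It is not a Hanwha Vision tool or an ISO/IEC 42001 requirement. The `SiteStats` fields, the sample counts, and the 0.10 precision-spread tolerance are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class SiteStats:
    name: str
    true_alarms: int    # alarms an operator confirmed as real events
    false_alarms: int   # alarms an operator dismissed as nuisance
    hours: float        # monitored hours in the evaluation window

    @property
    def precision(self) -> float:
        """Share of raised alarms that were real (ignores missed events)."""
        total = self.true_alarms + self.false_alarms
        return self.true_alarms / total if total else 0.0

    @property
    def false_alarms_per_hour(self) -> float:
        return self.false_alarms / self.hours if self.hours else 0.0


def consistency_report(sites: list[SiteStats], max_spread: float = 0.10) -> dict:
    """Flag the model as inconsistent if precision varies too much across sites."""
    precisions = [s.precision for s in sites]
    spread = max(precisions) - min(precisions)
    return {"spread": spread, "consistent": spread <= max_spread}


week = [
    SiteStats("lobby-day", true_alarms=90, false_alarms=10, hours=168),
    SiteStats("parking-night", true_alarms=60, false_alarms=40, hours=168),
]
for s in week:
    print(s.name, f"precision={s.precision:.2f}", f"FA/h={s.false_alarms_per_hour:.2f}")
print(consistency_report(week))
```

In this made-up week, the lobby reaches 0.90 precision but the night-time parking lot reaches only 0.60. That spread of 0.30 fails the assumed 0.10 tolerance, which is the kind of gap between lab accuracy and field consistency the paragraph describes. Precision ignores missed detections, so a fuller evaluation would also track recall.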

AI Governance and the Role of ISO/IEC 42001

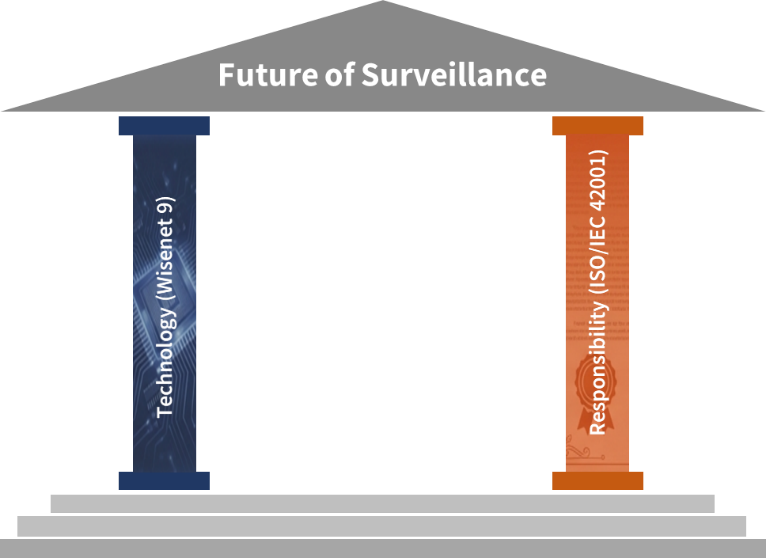

While hardware like Wisenet 9 provides the technical reliability, governance ensures the ethical and operational accountability of AI. This is where ISO/IEC 42001, the first international standard for AI Management Systems (AIMS), becomes essential.

Unlike standards that focus on specific algorithms, ISO/IEC 42001 defines how AI should be governed throughout its entire lifecycle, from initial development to ongoing monitoring. Hanwha Vision's recent ISO/IEC 42001 certification reflects a structured commitment to "Responsible AI". It ensures that advanced AI capabilities are underpinned by formal governance to maintain long-term trust, transparency, and compliance.

Industry Direction: Toward Responsible Intelligence

The future of the industry is being shaped by the convergence of AI-native architectures, high-quality data, and sustainable governance. Looking ahead, we expect to see:

- A broad shift toward AI-native SoC architectures that enable true real-time edge intelligence.

- The widespread adoption of Hybrid Architectures to optimize bandwidth and analytical depth.

- A governance-first approach where AI is deployed not just because it is powerful, but because it is accountable.

Conclusion: Intelligence by Design, Trust by Governance

AI has successfully transformed video surveillance from passive observation into active interpretation. Yet, true progress is measured not just by capability, but by responsibility.

Through AI-native SoC designs like Wisenet 9 and the adoption of global governance frameworks like ISO/IEC 42001, we are redefining what "intelligent surveillance" means. It is no longer just about a camera that "sees"; it is about a system that understands context, supports human decision-making, and operates with unwavering accountability. As we move forward, intelligence by design and trust by governance will be the twin pillars defining the next era of security.

Learn more:

https://www.hanwhavision.com/en/technology/wisenet9/